My name is “Suhan Kacholia.” Using those six simple syllables, my friends and family members have been able to consistently refer to me from birth to the present day. Su-han Ka-cholia. Thirteen letters contain my entire history, my personality, and everything else about me.

Names provide access to the world. Through the name “Tokyo,” I am able to reference a large collection of buildings and people thousands of miles away, in a city I have never visited. Names allow me to discuss the lives of my friends and family, and also of Joe Biden and Kim Kardashian, whom I have never met.

It is kind of amazing that names even exist. How do we learn to associate a short set of sounds with whole continents, individuals, and complex organizations? How do we so effortlessly set up that link between a label and an object, often knowing next to nothing about the object in question?

There is a whole sub-field of philosophy dedicated to these questions. I learned about this field this summer, when I took a course on philosopher Saul Kripke’s book Naming and Necessity. I also learned that somehow, we still lack a definitive answer to how naming actually works. All existing philosophical responses to the question are unsatisfying and result in inconsistency or paradox. I was astonished—how do we so poorly understand a process so common and integral to our humanity?

I tried thinking through the question myself, and I came up with an answer that satisfies me, aligns with evidence from other fields, and resolves paradoxes other theories encounter. Of course, I may be wrong, and probably am. I am a mere 19-year-old undergraduate, not an academic philosopher, and I’ve only thought about this topic for about a month. Still, I thought it was valuable to write out my views, and I hope that others will point out flaws in my reasoning.

I’ll first provide a brief summary of existing theories of naming. This will obviously be an incomplete and shallow synopsis, and I encourage you to read the real papers and books for a more nuanced education, especially Naming and Necessity. Nevertheless, I believe it will provide all the context needed to understand why existing approaches are flawed, and why the alternative I sketch later makes sense.

Philosopher John Stuart Mill believed that names carry no connotation, only denotation. In other words, names do not have informational content; they are arbitrary words meant to point out “that thing, over there.” Long Island in New York is, indeed, a long island. However, we can easily imagine a place being called Long Island for arbitrary reasons: a tiny, landlocked city in Kansas could have been named Long Island, and that would be weird, but still fine. The Holy Roman Empire was neither holy, nor Roman, nor an empire; it doesn’t matter, though, because the name is just picking out some entity.

However, Mill’s theory had issues.

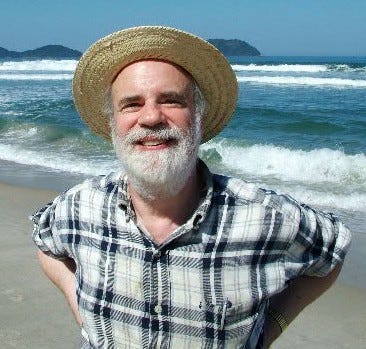

The Ancient Greeks called the star they saw in the morning Phosphorus, and the star they saw in the evening Hesperus. As it turns out, neither is a star, and both are the same thing: the planet Venus.

If we accept Mill’s theory of reference, the following two sentences should convey the same thing:

Hesperus is Hesperus

Hesperus is Phosphorus

The object, after all, is the same in both cases. However, clearly, the second sentence conveys some information—namely, that the star we see in the morning is the same entity as the star we see in the evening. The first sentence, meanwhile, is something we can know a priori, or without any experience or evidence from the world. Of course Hesperus is Hesperus—you can say that without even knowing what Hesperus refers to.

This problem motivated the descriptivist theory of reference, developed most prominently by Gottlob Frege and Bertrand Russell. In this view, proper names are equivalent to a description (or a set of descriptions) of an object. For example, “Aristotle” is equivalent to “the greatest philosopher of antiquity,” “the student of Plato,” and so on. Descriptivism holds that a name refers to whichever object uniquely satisfies the description (or descriptions) the speaker associates with it.

Using this theory, we can restate the sentence “Hesperus is Phosphorus” as “The star which appears in the evening is the same as the star which appears in the morning.” We are learning that the same object satisfies two distinct descriptions, which is new information.

The appeal of this theory shines in cases where a name might refer to multiple possible objects. For instance, imagine you have a friend named Joe Biden, who has a soccer game tonight. If you say, “Joe Biden is losing,” a mutual friend might ask you, “Which Joe Biden do you mean?” To clarify, you could replace “Joe Biden” with a description of the one you mean: “My friend on the soccer team is losing,” or, if you meant the politician, “The President of the United States is losing.” This seems to indicate that the crux of reference is descriptive in nature.

However, descriptivism is also flawed. Saul Kripke, in Naming and Necessity, laid out three main criticisms of the theory that arguably devastated it.

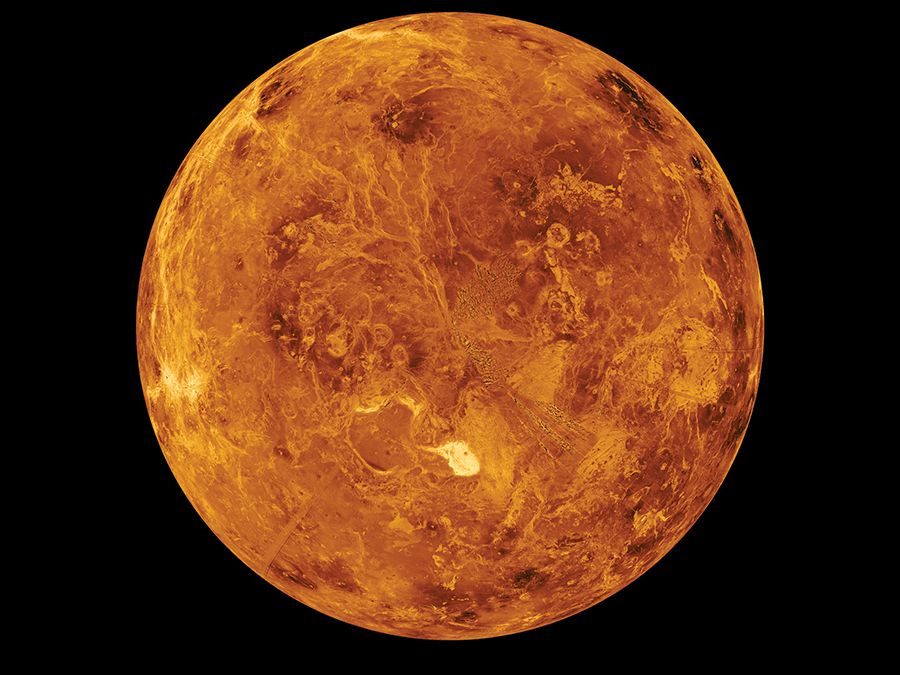

The first, the semantic argument, is easy to understand. Sometimes, people are able to refer to someone without having a unique description. For instance, many people know that Richard Feynman is a famous physicist, but don’t know much else about him. However, “famous physicist” is hardly a unique descriptor—they might apply the same description to Einstein or Bohr. You can’t replace Richard Feynman with “famous physicist” and know exactly who you are talking about.

The second, the epistemic argument, concerns what information can be known a priori. For instance, I can know that “the greatest philosopher from antiquity is the greatest philosopher from antiquity” without any further information about the world (the statement has the form A = A, and such simple identity statements are knowable a priori). It is also knowable a priori that “Aristotle is Aristotle,” for the same reason. However, it is not knowable a priori that “Aristotle is the greatest philosopher from antiquity.” This statement requires some further evidence or engagement with the world. Because the description and the name are not interchangeable here, they cannot be equivalent to each other.

The last argument, the modal argument, requires understanding Kripke’s concept of rigid designation. Essentially, a rigid designator is something which designates the same object across all possible worlds. The description “President of the United States in 2001” is not a rigid designator, for we can easily imagine a state of affairs in which Florida used normal ballots and Gore won. “Al Gore,” on the other hand, is a rigid designator. He might have been called something else, he might not have entered politics, but he would still be the same guy. This example shows the intuition behind Kripke’s modal argument—while descriptions are not (usually) rigid, and can designate different entities in different possible worlds, names are rigid. Thus, they cannot be equivalent.

To replace descriptivism, Kripke posits the causal theory of reference. This is similar to Mill’s theory, except it now includes a “causal chain of reference.” There is an “initial baptism” event, in which a name becomes a rigid designator of an object. Then, that name gets passed down to others in a causal sequence of events. For instance, consider a baby whose parents name her Jane. Bob, a friend of the family, does not know the baby’s name is Jane, for he was not at the initial baptism event. However, Jane’s mom, who was there, tells him Jane’s name. Then, Bob can tell his friends about Jane.

While this theory seems more plausible, it also leaves many questions hanging. For instance, how does each user pass the name on to the next and refer to the “right” individual each time? Imagine I have a pet aardvark named “Socrates.” When I discuss Socrates, how do my friends know which Socrates I am telling them about?

Moreover, there are paradoxes that both theories of reference run into. For instance, consider someone who knows they live next to a writer named Robert Galbraith. They don’t know that Robert Galbraith is the pen name of J.K. Rowling. So, they would agree with both of these sentences:

J.K. Rowling has writing talent

Robert Galbraith has no writing talent

But because the names refer to the same person, you should be able to substitute one for the other, which leaves the speaker believing both “J.K. Rowling has writing talent” and “J.K. Rowling has no writing talent.”

Or, consider a case in which a French speaker named Pierre sees a picture of a city called Londres and believes it is pretty. He would agree with the sentence, “Londres est jolie.” Years later, however, he moves to London, learns English by living there rather than through translation, and ends up in an ugly part of the city; he never realizes that the “London” he lives in is the “Londres” of the picture. He would agree with the sentence, “London is not pretty.” Translation should not affect the contents of a sentence, so Pierre would simultaneously believe “London is pretty” and “London is not pretty.” While this may seem like a far-fetched example, this very thought experiment has kept philosophers up at night, including Kripke, who essentially declared defeat. He writes, “Hard cases make bad law,” implying these seemingly irresolvable edge cases are irrelevant to his larger picture.

Philosophers have spilled many words trying to fix these theories to make sense of these puzzles, but to me, their efforts come off as… kludgey. In the end, maybe they are right.

But I have a different theory—one that is more elegant, consistent with evidence from AI, developmental psychology, and cognitive science, and inclusive of those hard cases.

Humans arrive in the world in a state of uncertainty. We know nothing for sure, except, as Descartes famously said, that we exist. Immediately, we encounter a deluge of sensory information: diverse sights, sounds, feelings, smells, tastes. Our brains must learn to sort this noisy data into something sensible and regular, lest the world constantly overwhelm our limited machinery.

Psychologist Lisa Feldman Barrett, in her brilliant book How Emotions Are Made, argues that humans divide the world into concepts to deal with this unfathomable complexity. Concepts are mental representations of some set of objects that display some commonality or pattern among them. They are what allow us to recognize tables as tables, or the color red as red. Concepts are extensible and modular: we can combine concepts to create new ones. The concept of a vehicle, for example, depends on the concepts of car, truck, bike, and so on. Barrett’s book argues that common emotions, like fear, anger, and love, are learned concepts like any other, rather than innate or natural bodily reactions.

In most cases, concepts are difficult to define exactly. Even for something as simple as a chair, it is hard to pin down exactly what we mean. We might define a chair, for example, as “a four-legged object that humans sit on.” But a three-legged chair is still a chair, and a couch is a four-legged object that humans sit on, yet it is not a chair. Instead, as Barrett argues, humans use statistical learning to predict similarities between different instances of a concept. Rather than having a strict definition for chair (or human, or bird, or whatever), we have learned that there is a cluster of things that have similar properties.
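A toy contrast between the strict chair definition and similarity to a cluster might look like the following Python sketch. The features, the three prototypes, and the use of Jaccard overlap as the similarity measure are all hypothetical choices made for illustration; nothing here is claimed to be how brains actually measure similarity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thing:
    legs: int
    traits: frozenset  # observable features, e.g. {"seat", "backrest"}


def strict_definition_is_chair(thing: Thing) -> bool:
    """The rule from the text: 'a four-legged object that humans sit on.'"""
    return thing.legs == 4 and "sat_on" in thing.traits


# Hypothetical cluster prototypes: bundles of features typical of each concept.
PROTOTYPES = {
    "chair": frozenset({"sat_on", "seat", "backrest", "one_person"}),
    "couch": frozenset({"sat_on", "seat", "backrest", "cushions", "several_people"}),
    "table": frozenset({"flat_top", "holds_objects"}),
}


def similarity(a: frozenset, b: frozenset) -> float:
    """Jaccard overlap: shared features divided by all features (0 to 1)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def nearest_cluster(thing: Thing) -> str:
    """Label a thing with the concept whose prototype it most resembles."""
    return max(PROTOTYPES, key=lambda name: similarity(thing.traits, PROTOTYPES[name]))


three_legged_chair = Thing(3, frozenset({"sat_on", "seat", "backrest", "one_person"}))
couch = Thing(4, frozenset({"sat_on", "seat", "backrest", "cushions", "several_people"}))

print(strict_definition_is_chair(three_legged_chair), nearest_cluster(three_legged_chair))  # False chair
print(strict_definition_is_chair(couch), nearest_cluster(couch))  # True couch
```

The strict rule gets both cases wrong, while the cluster match gets both right. Leg count never enters the similarity score at all: no single feature is decisive, which is the point of treating a concept as a cluster.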

This view is close to what Eliezer Yudkowsky discusses in a series of LessWrong posts about language. His concept of “similarity clusters” resembles Barrett’s concept of, well, concepts. As he writes, “A dictionary is best thought of, not as a book of Aristotelian class definitions, but a book of hints for matching verbal labels to similarity clusters, or matching labels to properties that are useful in distinguishing similarity clusters.”

This method of statistical learning of concepts is also similar to how modern machine learning (ML) works. Earlier attempts at creating artificial intelligence, like expert systems and “Good Old-Fashioned AI” (GOFAI), largely failed because it is exceedingly difficult to write explicit rules for concepts and behaviors. ML achieved success by relying on probabilities and modeling uncertainty instead. Given a large corpus of data, an ML model can statistically learn the common features of a concept. There might be no essence to the visual presentation of the number 5, yet artificial neural networks still identify different variations of 5 with high accuracy, just through probabilistic learning and updating. Our brains probably work similarly; relying on probabilities is cheaper and more flexible than relying on rules.

This is interesting and all, but you might ask: how is it at all relevant to our study of naming?

Well, proper names are a subset of concepts. London is a concept, and Aristotle is a concept. Based on the evidence discussed above, our brains probably evolved complex machinery to categorize, probabilistically identify, and infer properties of the objects we encounter in the world. Why would this not work on names? Why would we have a different architecture for this one hyper-specific task, when the existing framework is flexible enough to function perfectly well?

To make this model clearer, here is what I think probably goes on when we name something:

We encounter an unfamiliar person, place, or organization. Our brain then creates a new, empty concept for it. This is analogous to instantiating a class in object-oriented programming. Our brain then labels that concept with whatever name (or names) we hear associated with it.

The brain populates the concept with properties, based on whatever it has learned about the object. For example, the concept for Aristotle might include properties like “student of Plato,” “author of a book called Politics,” and so on. Probabilities are involved here (e.g., I’m 99% confident Aristotle wrote Politics, given there’s no real evidence to believe otherwise, but I’m only 85% confident William Shakespeare wrote the plays attributed to him, given the long-running debate over their authorship).

Concepts inherit properties of parent concepts. For example, Aristotle inherits the properties of the Famous Old Greek Dudes concept and the Human concept.

Concepts are flexible. When we learn new information about a concept, we can merge it with other concepts (e.g., learning that Hesperus is the same concept as Phosphorus) or add new properties or labels.

Sometimes, properties are necessary conditions. These result from axioms we accept. For example, if we accept the biological axiom that a species is a group of organisms that can interbreed and produce fertile offspring, then a Golden Retriever and a Chihuahua must both be members of the concept Canis lupus familiaris, despite their other properties being so different.

When we communicate about concepts with another person, we are aiming to “link” our internal concept symbols so that they match. For instance, when news outlets announced the winner of the 2020 election, they aimed to update my “Joe Biden” concept with the property “President of the United States.”
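The steps above can be sketched with the object-oriented analogy the model itself uses. In this Python sketch, the `Concept` class, its field names, the credence numbers, and the rule of keeping the higher credence when two concepts merge are all hypothetical illustrations, not a claim about how the brain actually stores concepts.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Concept:
    """Hypothetical mental concept: names, properties held with some credence, parents."""
    labels: set[str] = field(default_factory=set)
    own: dict[str, float] = field(default_factory=dict)  # property -> credence in [0, 1]
    parents: list[Concept] = field(default_factory=list)

    def learn(self, prop: str, credence: float) -> None:
        """Add or revise a property, held with a degree of belief."""
        if not 0.0 <= credence <= 1.0:
            raise ValueError("credence must lie in [0, 1]")
        self.own[prop] = credence

    def properties(self) -> dict[str, float]:
        """Inherited properties first; the concept's own properties override them."""
        merged: dict[str, float] = {}
        for parent in self.parents:
            merged.update(parent.properties())
        merged.update(self.own)
        return merged

    def absorb(self, other: Concept) -> None:
        """Merge in a concept learned to pick out the same object."""
        self.labels |= other.labels
        for prop, credence in other.own.items():
            # Assumption: keep the higher credence when both concepts hold a property.
            self.own[prop] = max(self.own.get(prop, 0.0), credence)
        for parent in other.parents:
            if all(parent is not p for p in self.parents):
                self.parents.append(parent)


human = Concept(labels={"Human"}, own={"mortal": 0.999})
greek = Concept(labels={"Famous Old Greek Dudes"}, own={"lived in Ancient Greece": 0.99}, parents=[human])

# A new, empty concept, labeled with the name we heard and extending a parent concept.
aristotle = Concept(parents=[greek])
aristotle.labels.add("Aristotle")
# Properties arrive with credences.
aristotle.learn("student of Plato", 0.99)
aristotle.learn("author of Politics", 0.99)
# Inherited properties come along for free.
print(aristotle.properties()["mortal"])  # 0.999

# Learning that Hesperus is Phosphorus merges two concepts.
hesperus = Concept(labels={"Hesperus"}, own={"seen in the evening": 0.95})
phosphorus = Concept(labels={"Phosphorus"}, own={"seen in the morning": 0.95})
hesperus.absorb(phosphorus)
print(sorted(hesperus.labels))  # ['Hesperus', 'Phosphorus']

# Communication: a news report adds a property to my "Joe Biden" concept.
biden = Concept(labels={"Joe Biden"})
biden.learn("President of the United States", 0.99)
```

Parents are linked at runtime rather than through Python subclassing, since new concepts are created on the fly as we meet new people and places.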

Now, our brain has sufficient information to probabilistically identify what name goes with an object we encounter, and infer the properties of an object we know the name of. To illustrate examples of both processes:

Identification: I hear someone talking about “some Ancient Greek dude.” Multiple concepts in my conceptual set possess this property, and they are roughly equally famous. Let’s say the set is [Aristotle, Plato, Socrates, Alexander the Great], and I am ~25% confident that the person being referred to is each of them. They then mention that they read a book by this Ancient Greek dude. Now, my set narrows to the famous Ancient Greek dudes I know who wrote a book: [Aristotle, Plato], with ~50% confidence for each. Then they say the person’s name: Aristotle. Now, I am 99.99% confident they are referring to the person who existed in Ancient Greece, wrote philosophy books, and went by Aristotle, given the information I know about this concept. I am not 100% confident: there could always be other possibilities (maybe Aristotle didn’t exist; maybe there’s another Ancient Greek philosopher Aristotle they are referring to; maybe I live in a simulation, etc.).

Inference: When I hear someone discussing Aristotle and his poetics, I can be something like 99% confident they are discussing a philosopher who (very probably) lived in Ancient Greece and (very probably) studied under Plato, and not someone’s pet armadillo named Aristotle. I know this because of:

The base rate of fame of the Ancient Greek Aristotle; he is much more likely to be discussed than anyone (or anything) else called Aristotle, and

The fact that the Ancient Greek Aristotle has the property “Philosopher,” and poetics is a philosopher’s topic (he wrote the Poetics); thus, if someone is discussing his poetics, that shifts my probability toward them discussing the Ancient Greek Aristotle.
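Both processes can be read as Bayesian updating. The Python sketch below is one way to make that concrete; every prior and likelihood in it is a made-up number chosen to echo the percentages above, and the extra “some other Aristotle” hypothesis is an assumption added to show why confidence stops short of 100%.

```python
from __future__ import annotations


def update(belief: dict[str, float], likelihood: dict[str, float]) -> dict[str, float]:
    """Bayes' rule: posterior is proportional to prior times likelihood, renormalized.

    Hypotheses missing from `likelihood` are treated as ruled out (likelihood 0).
    """
    unnormalized = {h: p * likelihood.get(h, 0.0) for h, p in belief.items()}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("the evidence rules out every hypothesis")
    return {h: w / total for h, w in unnormalized.items()}


# Identification. Hypothetical prior: four equally famous Greeks (~25% each),
# plus a tiny weight for an Aristotle I have never heard of.
weights = {"Aristotle": 1.0, "Plato": 1.0, "Socrates": 1.0,
           "Alexander the Great": 1.0, "some other Aristotle": 0.0001}
belief = update(weights, {h: 1.0 for h in weights})  # no evidence yet: just normalize
# "I read a book by him": Socrates and Alexander wrote none (~50% each for the rest).
belief = update(belief, {"Aristotle": 1.0, "Plato": 1.0, "some other Aristotle": 1.0})
# They say the name "Aristotle".
belief = update(belief, {"Aristotle": 1.0, "some other Aristotle": 1.0})
print(round(belief["Aristotle"], 4))  # 0.9999

# Inference. Hypothetical base rates of each "Aristotle" coming up in conversation,
# and of such a conversation mentioning poetics.
base_rate = {"Aristotle the philosopher": 0.9, "pet armadillo Aristotle": 0.1}
mentions_poetics = {"Aristotle the philosopher": 0.1, "pet armadillo Aristotle": 0.01}
inferred = update(base_rate, mentions_poetics)
print(round(inferred["Aristotle the philosopher"], 3))  # 0.989
```

Each piece of evidence only reweights the candidates; at no point does the sketch treat a description as the meaning of the name.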

In this view, descriptions do not fix the reference of a name, but are properties of concepts that bear that name as a label. These properties are useful in identifying what object a name refers to—but they are not equivalent to the object. This is a probabilistic process. Unless something is definitionally true (like a dog being a member of Canis lupus familiaris, according to the axioms of biology I’ve internalized), I am never 100% certain the name refers to the object I am thinking of.

Let’s run through a few cases that gave the previous theories of language trouble, and see how this theory addresses them:

Modal Argument and Aristotle

In a different world, Aristotle could have been a farmer instead of taking up philosophy, but he would still be Aristotle. This argument cuts against descriptivism, as the description “greatest philosopher of antiquity” does not refer to Aristotle in all possible worlds, while the name “Aristotle” does.

In this view, we can reframe the description and the name as conditions in a probability statement. In this world, we have learned that Aristotle (probably) possesses the property “greatest philosopher of antiquity.” Thus, when given that description, we are essentially computing the probability P[x = Aristotle | x is the greatest philosopher of antiquity], resulting in some high number close to 1. Kripke, meanwhile, asks us to compute the probability P[x = Aristotle | x = Aristotle & x is a farmer]. Because the condition already includes x = Aristotle, this probability, like P[x = Aristotle | x = Aristotle], is always 1. If we were not told that the farmer is Aristotle, and were instead asked to identify some random Greek farmer in a different world, our probability that he is Aristotle would be pretty low.
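Spelled out with the definition of conditional probability, the contrast looks like this. Here A abbreviates “x is Aristotle,” F “x is a farmer,” and D “x is the greatest philosopher of antiquity”; these letters are shorthand introduced only for this sketch.

```latex
\begin{aligned}
P(A \mid A \wedge F) &= \frac{P(A \wedge A \wedge F)}{P(A \wedge F)} = \frac{P(A \wedge F)}{P(A \wedge F)} = 1
  && \text{(Kripke's stipulation; requires } P(A \wedge F) > 0\text{)} \\
P(A \mid D) &= \frac{P(D \mid A)\,P(A)}{P(D)} \approx 1
  && \text{(learned from evidence in our world)} \\
P(A \mid F) &= \frac{P(F \mid A)\,P(A)}{P(F)} \ll 1
  && \text{(a random Greek farmer is rarely Aristotle)}
\end{aligned}
```

The first line equals 1 for any A and F, as long as the condition has nonzero probability, which is why the modal case comes out trivial rather than as a counterexample.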

Descriptions are conditions in probability statements. Rigid designation is a probability statement whose condition is that the object is itself.

Epistemic/Semantic Argument

The epistemic argument does not really apply to this theory; descriptions are not equated with names, so it does not matter that one identity statement can be known a priori and the other cannot.

The semantic argument also does not work against it. We can restate Kripke’s Richard Feynman example as someone making a new mental concept whose name label is “Richard Feynman” and whose career property is “famous physicist.” Assume the only other member of the set “famous physicist” in this person’s mind is Einstein. When this person encounters some entity with the description “famous physicist,” they assign probability 0.5 to the entity’s name being Richard Feynman. This view does not claim that descriptions necessarily have unique referents: multiple concepts can possess the same properties but carry different labels.
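As a minimal Python sketch of this case, assuming the person’s mind holds only the two concepts named in the text and weighs them equally (the dictionary and the function name are hypothetical):

```python
from __future__ import annotations


def candidates_for(description: str, concepts: dict[str, set[str]]) -> dict[str, float]:
    """Spread belief evenly over every concept carrying the description."""
    matches = [name for name, props in concepts.items() if description in props]
    if not matches:
        return {}
    return {name: 1 / len(matches) for name in matches}


# Hypothetical conceptual set of someone who knows very little physics.
concepts = {
    "Richard Feynman": {"famous physicist"},
    "Albert Einstein": {"famous physicist"},
}
print(candidates_for("famous physicist", concepts))
# {'Richard Feynman': 0.5, 'Albert Einstein': 0.5}
```

A shared description leaves the reference ambiguous, yet nothing breaks: the two concepts stay distinct because they carry different labels.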

J.K. Rowling

In this case, the speaker’s brain has created two symbols, “J.K. Rowling” and “Robert Galbraith,” which contain different properties and are not linked. Of course, we, as external observers, know that those two labels (probably) refer to the same entity, for our concepts have been linked. However, this fact has not yet been communicated to the speaker. From their perspective, they are being completely consistent and rational by asserting that “J.K. Rowling has writing talent” and “Robert Galbraith has no writing talent.” They would not agree with the statement “J.K. Rowling is Robert Galbraith,” for their internal concepts are not aligned with ours.

A similar thing occurs with the Frenchman Pierre. He has two symbols in his mind, one for Londres and one for London, each possessing different properties. Once he learns that both name the same city, he can merge the two concepts and reconcile their properties.
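The claim that separate concepts can hold opposite properties without contradiction, until the believer learns they are the same, can be sketched in Python. The property names and the rule of setting clashing properties aside for re-examination are hypothetical simplifications.

```python
from __future__ import annotations


def merge_concepts(a: dict[str, bool], b: dict[str, bool]) -> tuple[dict[str, bool], set[str]]:
    """Combine two concepts' beliefs once they are learned to be the same object.

    Properties the two concepts disagree on are returned separately, unresolved,
    for the believer to re-examine.
    """
    clashes = {prop for prop in a.keys() & b.keys() if a[prop] != b[prop]}
    merged = {prop: value for prop, value in {**a, **b}.items() if prop not in clashes}
    return merged, clashes


# Before the link: each concept is internally consistent (one value per property).
rowling = {"has writing talent": True, "wrote Harry Potter": True}
galbraith = {"has writing talent": False, "lives next door": True}

# After learning "J.K. Rowling is Robert Galbraith":
merged, clashes = merge_concepts(rowling, galbraith)
print(clashes)  # {'has writing talent'}
print(merged)   # {'wrote Harry Potter': True, 'lives next door': True}
```

The same function applies to Pierre’s Londres and London concepts: the conflict over prettiness only surfaces at the moment of merging.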

Water

This isn’t directly related to proper names, but is adjacent. There is a thought experiment, posed by Hilary Putnam, involving a Twin Earth, where there is a substance that tastes like water, looks like water, and is called “water” by its inhabitants, but has a different chemical composition than H2O (call it “XYZ”). Is it still water?

This theory makes it clearer what is going on here. Usually, when trying to decide whether something is water, we take into account characteristics like transparency, taste, etc. However, under the axioms of chemistry we have adopted, the only essential property that water must possess is the chemical formula H2O. This is an arbitrary decision: we can imagine another Twin Earth, in which we privilege boiling point as the relevant necessary property for an object being water. But, in this world, because we define water as H2O, the probability that something is water, given that it does not have the chemical composition H2O, is, well, 0. When we don’t know its composition, but are told that the object is a transparent liquid that tastes neutral and boils at 100 degrees Celsius, the probability that it is water approaches, but does not reach, 100%.
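A minimal Python sketch of this asymmetry, assuming (as “we define water as H2O” suggests) that composition is treated as both necessary and sufficient, and using a made-up 0.99 ceiling for evidence from surface features alone:

```python
from __future__ import annotations

WATERY = frozenset({"transparent", "tastes neutral", "boils at 100 C"})


def probability_water(formula: str | None = None, observed: frozenset[str] = frozenset()) -> float:
    """Credence that a sample is water under the axiom 'water is H2O'."""
    if formula is not None:
        # Composition known: the defining property settles the question outright.
        return 1.0 if formula == "H2O" else 0.0
    # Composition unknown: surface features raise confidence, but a hypothetical
    # ceiling of 0.99 leaves room for a Twin Earth liquid such as XYZ.
    return 0.99 * (len(observed & WATERY) / len(WATERY))


print(probability_water("XYZ", WATERY))    # 0.0  (Twin Earth "water")
print(probability_water(observed=WATERY))  # 0.99 (looks, tastes, and boils like water)
```

The necessary condition acts as a hard override, while everything else only nudges a probability that never quite reaches 1.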

Naming is a deceptively simple act. Philosophers have attempted to explain names by equating them with descriptions, or elaborate games of telephone, but their approaches thus far have led to failure. I argue that names instead are probabilistic guesses that an object possesses a set of properties, aligning with mental concepts we create.

The work that existing philosophers have done on this topic is important, and interesting—Kripke, in particular, is brilliant, and his concepts have transformed related fields, like philosophy of mind and metaphysics. I encourage readers to read his work.

Of course, I might be wrong, or have the details incorrect here. This is a high-level, informal overview of what I think is going on; it is not meant to be technically detailed and airtight (I think the examples towards the end especially need to be developed more). Rather than a conclusive theory, I think of this as a sketch of an alternative perspective on naming that, to the best of my knowledge, the literature has not yet explored. I invite others to expand upon this, or tear it to shreds if I’m wrong.